AI facilitates System 1 thinking and may suppress System 2 thinking.

This is a hypothesis that I have been reflecting on lately.

As Daniel Kahneman explained in 𝘛𝘩𝘪𝘯𝘬𝘪𝘯𝘨, 𝘍𝘢𝘴𝘵 𝘢𝘯𝘥 𝘚𝘭𝘰𝘸, there are two types of thinking:

𝗦𝘆𝘀𝘁𝗲𝗺 𝟭 – automatic and intuitive, but prone to mistakes

𝗦𝘆𝘀𝘁𝗲𝗺 𝟮 – deliberate and thoughtful, but requires more effort

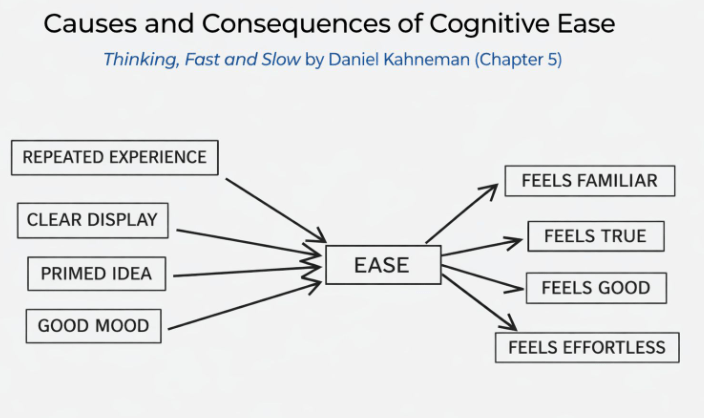

Chapter 5 of the book covers cognitive ease and strain: how easy or difficult something feels to process. Certain factors make something feel easy, as noted in the chart, and the opposite of those factors often causes strain.

For example, the book mentions a study where students at Princeton were given some problems that had tricky wording. Half of them saw the problems in a normal font and half of them saw the problems in “a small font in washed-out gray print.”

The result: 90% of the students who saw the normal font made at least one mistake, versus only 35% of those who saw the hard-to-read version. The students with the difficult-to-read font did better.

The explanation is that the students who faced cognitive strain, from reading the faint print, looked at the problems more carefully and critically.

The conclusion was that cognitive strain can sometimes mobilize System 2 thinking. And the reverse may also be true: cognitive ease may lead to System 1 thinking.

𝗪𝗵𝘆 𝘁𝗵𝗶𝘀 𝗺𝗮𝘁𝘁𝗲𝗿𝘀 𝗻𝗼𝘄

My observation is that AI seems to facilitate cognitive ease. A few examples (with the drivers of cognitive ease in capitals):

In the AI chat interface, we ask questions of a polite and affirming personality that might put us in a GOOD MOOD.

When answering questions, the chats bring up examples from prior conversations, which effectively PRIME THE IDEA.

The results look CLEARLY DISPLAYED, with perfectly formed sentences and financial models that look like they came from an investment bank.

As more of us spend our time judging output from AI, this is potentially a problem: the easier an answer feels to process, the less likely we are to scrutinize it.

𝗛𝗼𝘄 𝘁𝗼 𝗮𝗰𝘁𝗶𝘃𝗮𝘁𝗲 𝗦𝘆𝘀𝘁𝗲𝗺 𝟮 𝘁𝗵𝗶𝗻𝗸𝗶𝗻𝗴

Interestingly, you could ask AI to apply System 2 thinking to the answer it gives you. In my experience, this works even better if you ask a model from a different provider.

However, the single easiest thing you can do is to … slow down. Take a step back, take a breath, and consciously switch to System 2 thinking. Turn into a skeptical curmudgeon with a lot of questions.

In practice, System 2 thinking is partially about asking the right types of questions. In analyzing a financial model, for example, you might ask:

Where did these assumptions come from?

Is the answer internally consistent?

What would change this conclusion?

What is missing from this analysis?

Who is AI trying to please with this answer?
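If you want to make these questions a habit, one option is to bundle them into a reusable review prompt that you paste into a second chat, ideally with a different model. The sketch below is only an illustration: `build_review_prompt` and `REVIEW_QUESTIONS` are hypothetical names, the wording of the instructions is an assumption, and nothing here calls a real AI service.

```python
# Hypothetical helper: it only builds text and does not call any AI service.
REVIEW_QUESTIONS = [
    "Where did these assumptions come from?",
    "Is the answer internally consistent?",
    "What would change this conclusion?",
    "What is missing from this analysis?",
    "Who is AI trying to please with this answer?",
]


def build_review_prompt(answer: str, questions: list[str] = REVIEW_QUESTIONS) -> str:
    """Wrap an AI-generated answer in a request for a skeptical review."""
    # Number the questions so the reviewer addresses each one explicitly.
    numbered = "\n".join(f"{i}. {q}" for i, q in enumerate(questions, start=1))
    return (
        "Act as a skeptical reviewer. Do not defend the answer below; "
        "look for weaknesses.\n\n"
        f"ANSWER UNDER REVIEW:\n{answer.strip()}\n\n"
        f"Address each question in turn:\n{numbered}"
    )


if __name__ == "__main__":
    print(build_review_prompt("Revenue grows 40% a year for five years."))
```

The prompt does not replace your own scrutiny. It just makes the slow, deliberate pass harder to skip.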

The better AI gets at facilitating cognitive ease, the more deliberately we’ll need to fight for cognitive strain.
